Guide to User Testing for HW Products

🛠️ How to run alpha and beta testing on physical devices.

Hey Friends🖐️,

Today we’ll discuss a reliable mechanism for determining if a product will be a hit or a miss after launch. This tool helps clarify if leadership had the right vision, if the product team defined valuable use cases, and if engineering implemented the correct technology. Let’s talk about user trials for physical products.

What Are Trials?

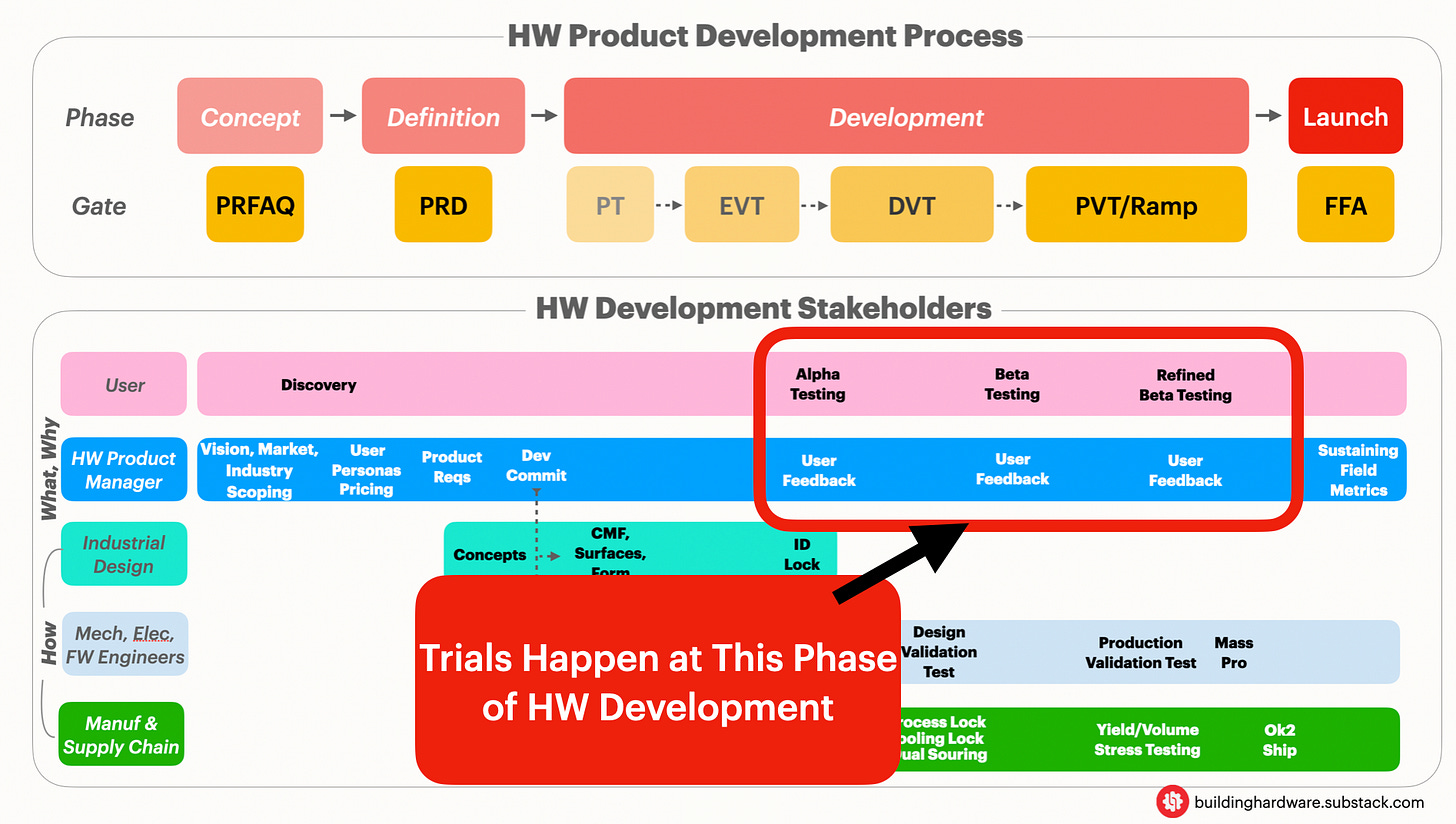

Trials are structured phases of product testing where a device is put into a customer’s environment before launch.

They validate whether the device fulfills the product requirements. Unlike engineering builds (EVT, DVT, PVT), which focus on technical feasibility (reliability, lab tests, certs, etc.), trials focus on user feedback. That said, the two overlap to some degree.

Trials are where a product manager can shine by ensuring customer needs are properly understood and the product can delight in a real world scenario. Why PMs specifically? Because the role of the HW PM is to ensure the product is financially feasible, technically viable, and desirable to users. Most of all, their role is to validate that a product solves genuine user problems.

There are generally three trial phases that happen during a typical ⚙️hardware product development process.

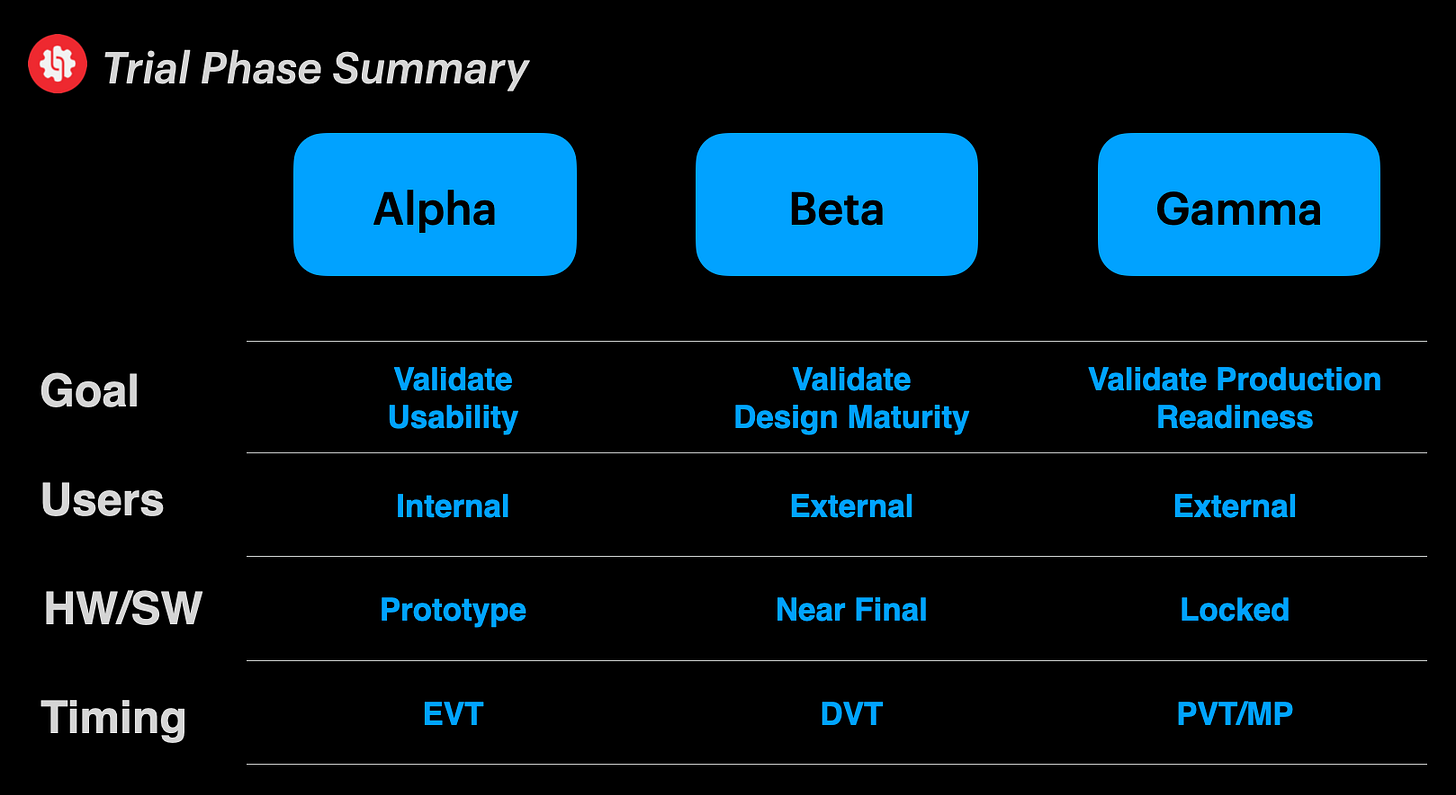

Phase 1: Alpha

Timing: Coincides with EVT builds.

Hardware Maturation: Prototypes that are fully functioning at a system level and cover basic, core functionality, but still have reliability gaps.

Software Maturation: Early builds with high bug rates, incomplete UI/UX, and initial functionality.

Users: Internal employees in office or lab settings who are more forgiving as they know the product isn’t mature.

Goal: Catch obvious non-conformities, validate usability, and deliver feedback to design/engineering.

Phase 2: Beta

Timing: Coincides with DVT builds.

Hardware Maturation: Design should ideally be finalized, as these units will go for certification testing.

Software Maturation: Near feature complete with room for tuning product scalability.

Users: Select group of customers in real world environments. These users have a lower tolerance for issues and expect higher quality experiences than employees.

Goal: Validate design maturity with unbiased feedback.

Phase 3: Gamma

Timing: Coincides with PVT builds.

Hardware Maturation: Design should be locked, ready for mass production ramp.

Software Maturation: Production release candidate that is stable and optimized for performance.

Users: Customers in real world environments, similar to beta.

Goal: Validate if the product is ready to be shipped at scale (functionally, operationally, logistically, and with all support systems intact).

Why Do We Need Trials?

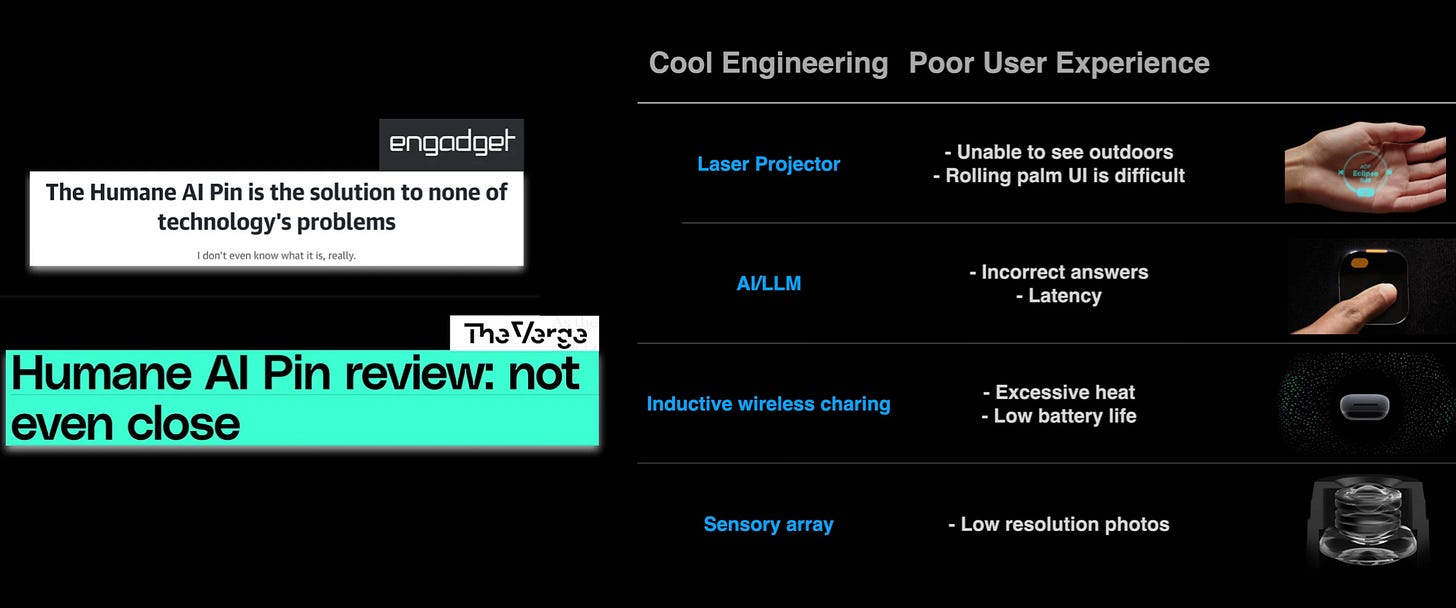

Because they prevent bad products from being shipped. I’ve talked in an earlier post about how Humane’s AI Pin was a disaster. In my humble opinion, the negative press could’ve been avoided if they had product managers actually advocating for desirable user experiences.

Humane’s PMs should’ve used trials as a mechanism to block inadequate features or ideas, even if the engineering team pushed them. Even if the technology seemed cool.

Alpha and beta testing should have raised red flags regarding issues like overheating, slow AI responses, low-resolution photos, and poor projector visibility.

What about examples of alpha and beta trials being done properly? Check out the two products below, which were discontinued before shipping.

On the left we have Amazon’s home drone camera, which seemed really cool, but noisy motors and flight issues reported by early users prevented launch. On the right we have Apple’s AirPower mat, which ran into thermal issues caused by its complex multi-coil design.

Both companies made the costly but right decision not to ship these devices into production.

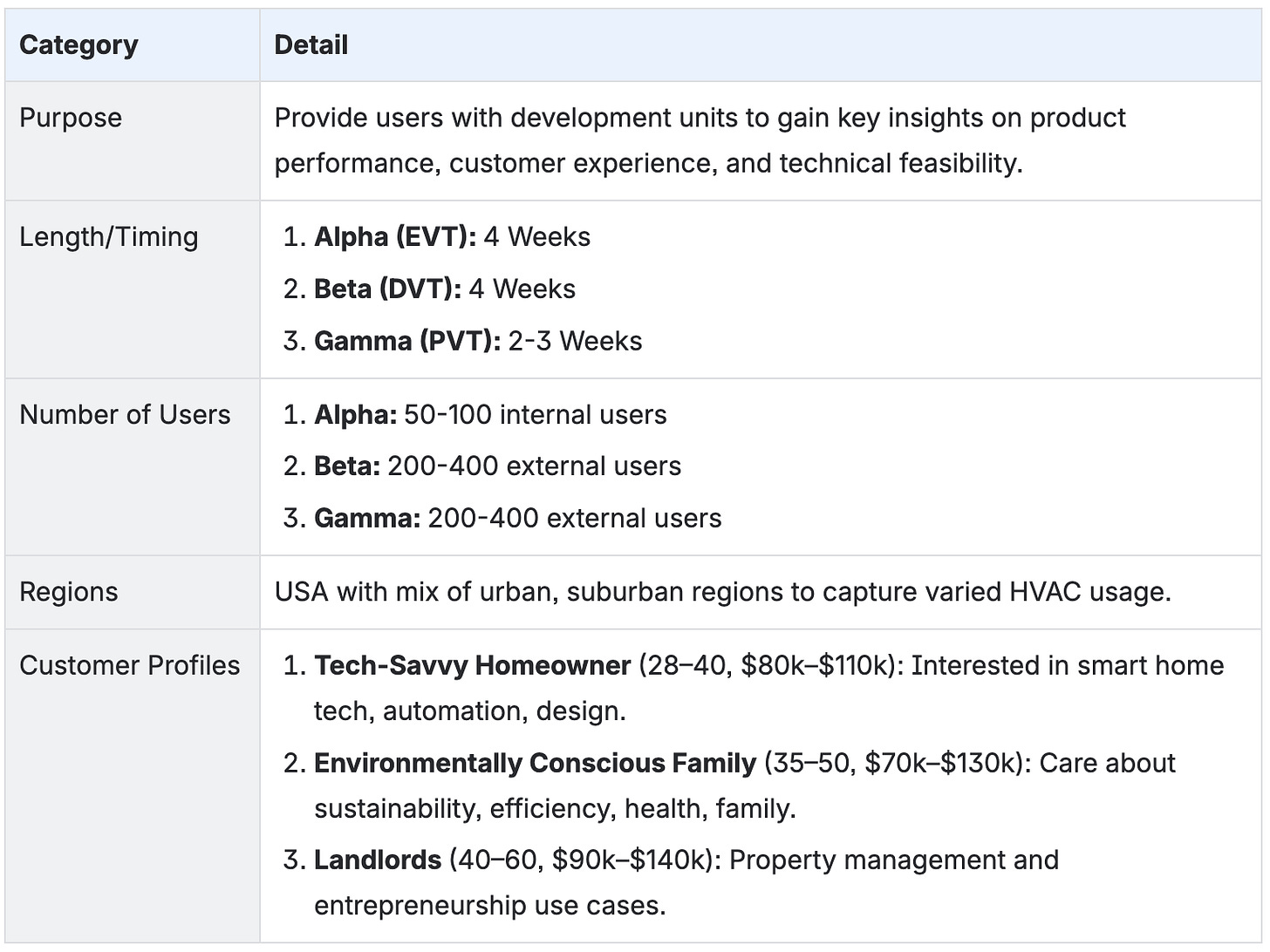

Example: How To Perform Trials

Imagine you’re a product manager at a smart thermostat company, designing your flagship product, the HeatSync Pro. It’s a premium smart thermostat that combines climate intelligence with an elegant design.

In earlier posts, we talked about how to develop a 💰 financial model and 🚀 product requirements for this thermostat. Now, let’s discuss how we can design user testing to validate assumptions and gather key feedback.

Overview

Let’s start by documenting a summary of the plan. Some general tips:

Because this is HW product development, customers need to feel, interact with, and physically use your product. Remember to take your time when planning these trials. The learnings can be exponential and help de-risk major issues post-launch.

Always account for extra quantities when planning builds with your contract manufacturer.

Our example only lists the US as a region, but ensure you test in multiple countries if you plan to sell there.

For example, once I was close to launching a product, but alpha testing in a country we hadn’t accounted for revealed a major design flaw. The device wasn’t compatible with certain power outlets. Our team assumed it would be, and we were wrong. This saved us from a lot of negative press after launch.
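
The tip about extra build quantities comes down to simple arithmetic. Here is a minimal sketch, assuming you plan a percentage of spares on top of your participant count plus a few units held back for the lab. The function name, the spare percentage, and the lab allocation are all hypothetical placeholders, not industry standards.

```python
import math


def units_to_build(participants: int, spare_percent: int, lab_units: int = 0) -> int:
    """Estimate how many trial units to request from the contract manufacturer.

    spare_percent and lab_units are illustrative planning inputs; pick values
    that match your own failure rates and shipping losses.
    """
    if participants < 0 or spare_percent < 0 or lab_units < 0:
        raise ValueError("inputs must be non-negative")
    # Round spares up so a fractional unit always becomes a whole device.
    spares = math.ceil(participants * spare_percent / 100)
    return participants + spares + lab_units


# Hypothetical beta trial: 50 testers, 20% spares, 5 units kept in the lab.
print(units_to_build(50, 20, lab_units=5))
```

Rounding up is deliberate: running one unit short during a trial is usually more painful than having one left over.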

Trial Overview Table

Test Plan

Next is the test plan. This is the meat and potatoes of your trials. Write a clear strategy for how core product requirements will be validated, with specific instructions for customers. Some tips are below:

Test as early as possible, especially with hardware products. Pick your top features in case you need to front load development. The later in a program a design flaw is found, the more expensive it is to fix.

To prioritize features, look for those that are hardware specific, introduce new technologies, require new designs, or, most importantly, are the ones that customers value. Test hardware and software in parallel, but if a priority call needs to be made, favor hardware: software issues can be mitigated later in the program at a lower cost than hardware flaws.

In our thermostat example, this would mean testing themes around air quality sensing, energy savings, and smart temperature routines.

Also, remember to spread out activities. Trials usually run in 4 week chunks. Putting everything in one week will overburden users, who will then take the path of least resistance and rush or skip tasks. That will prevent your team from getting reliable data.

A hypothetical test plan is shown below.

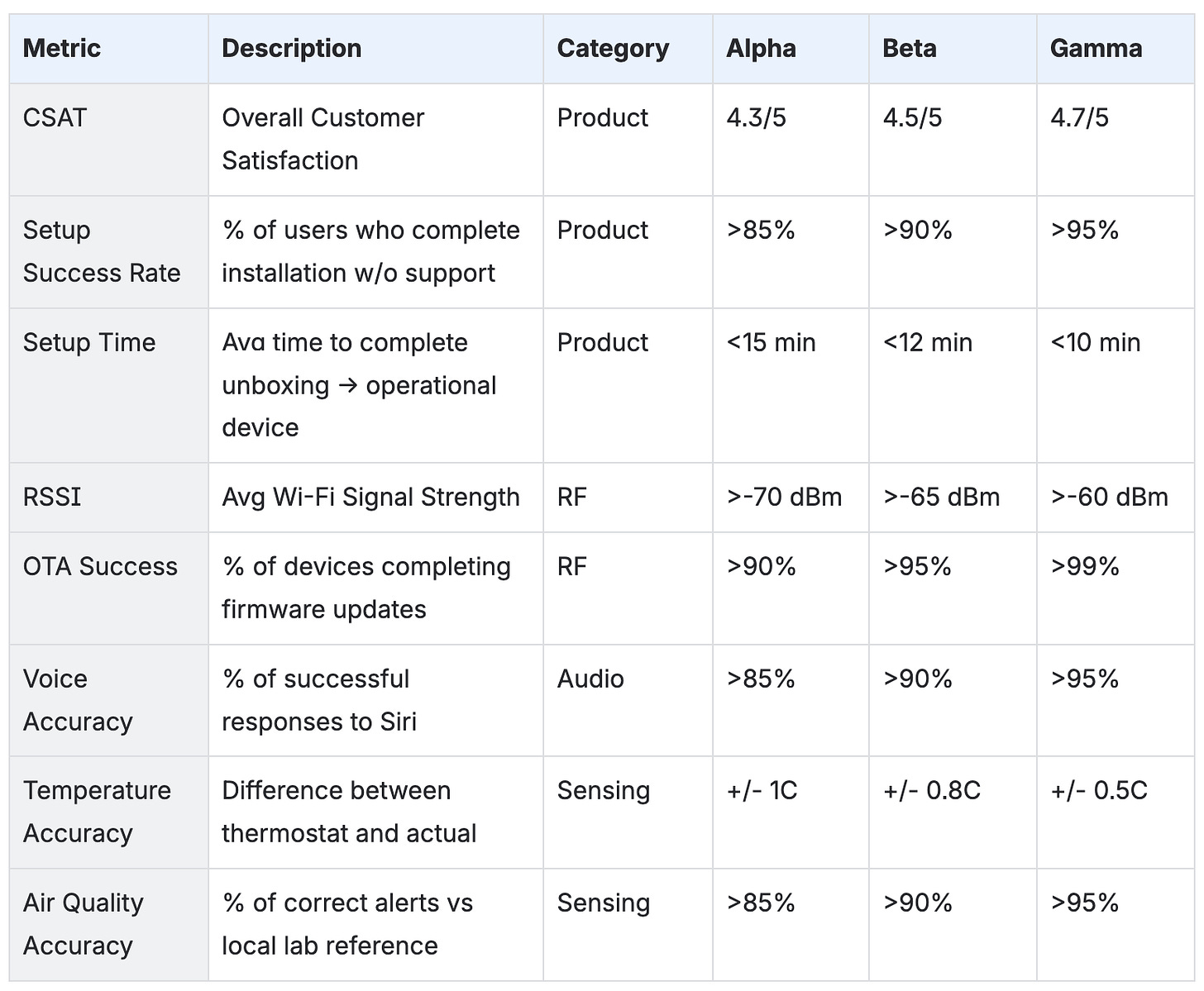

Metrics

The saying “you can’t improve what you don’t measure” holds true. The next step is to quantify each trial’s performance. While most items, such as signal strength, temperature accuracy, and sensing, can be monitored through dashboards, subjective metrics should be captured through surveys or other feedback mechanisms.

These include things like impressions of packaging and install experience. To some these may seem trivial, but consider every step of the customer’s journey. Everything from the inserts in the cardboard of the packaging to the type of fonts used on the branding is a reflection of your product.

One metric to capture overall impressions and product satisfaction is CSAT (Customer Satisfaction). Some companies use star ratings while others use NPS (Net Promoter Score). Whichever you choose, try to set a high target in trials, because once the product is in the market, customers will be less forgiving. It’s better to aim high in a controlled environment and miss than to get low customer satisfaction scores post-production.
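
If you collect survey responses yourself, both scores are easy to compute. Below is a minimal sketch using common conventions: CSAT as the share of 1 to 5 ratings that are a 4 or 5, and NPS as the percentage of promoters (9 to 10 on a 0 to 10 scale) minus the percentage of detractors (0 to 6). Your survey tool may define CSAT differently, so treat the threshold as an assumption.

```python
def csat(ratings: list[int]) -> float:
    """Percent of 1-5 ratings that are 4 or 5 (one common CSAT convention)."""
    if not ratings:
        raise ValueError("need at least one rating")
    satisfied = sum(1 for r in ratings if r >= 4)
    return 100.0 * satisfied / len(ratings)


def nps(scores: list[int]) -> float:
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one score")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


# Made-up survey responses, for illustration only.
print(csat([5, 4, 3, 2, 5]))
print(nps([10, 9, 8, 7, 6, 3, 10, 9, 5, 10]))
```

Note that NPS can range from -100 to 100, while CSAT as defined here ranges from 0 to 100, so the two are not directly comparable.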

Lastly, have realistic progressions for these metrics with each phase of the trial to help development teams. Below is a partial list of sample metrics for our thermostat example.
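
One way to picture this progression is a small table of per-phase targets that tightens from alpha to gamma, plus a check that flags which targets a trial missed. The sketch below is illustrative only: the metric names and every number in it are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    metric: str
    limit: float
    higher_is_better: bool

    def met(self, observed: float) -> bool:
        """True when the observed value satisfies this target."""
        if self.higher_is_better:
            return observed >= self.limit
        return observed <= self.limit


# Hypothetical targets that get stricter with each trial phase.
PHASE_TARGETS = {
    "alpha": [Target("csat_percent", 70, True), Target("avg_setup_minutes", 20, False)],
    "beta": [Target("csat_percent", 80, True), Target("avg_setup_minutes", 15, False)],
    "gamma": [Target("csat_percent", 90, True), Target("avg_setup_minutes", 12, False)],
}


def missed_targets(phase: str, observed: dict[str, float]) -> list[str]:
    """Return metrics that missed their target; unreported metrics count as missed."""
    return [
        t.metric
        for t in PHASE_TARGETS[phase]
        if t.metric not in observed or not t.met(observed[t.metric])
    ]


results = {"csat_percent": 82, "avg_setup_minutes": 17}
print(missed_targets("alpha", results))
print(missed_targets("beta", results))
```

The same results pass at alpha but fail at beta, which is the point: each phase should raise the bar so the team knows what “good enough for now” means.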

Incorporating Feedback

As highlighted above, one of the reasons for running trials is to fix product or design flaws. But what does that really look like? Below are some hypothetical examples.

Example 1: Audio Clarity

Problem: 30% of users during alpha trials report that the thermostat’s speaker is too quiet for voice prompts and alerts, especially in larger or noisier rooms. Some describe muffled audio when standing more than 6 to 8 feet away.

Mitigations: Below are some mitigations your engineering teams might explore for the next build (DVT) and trial (Beta). They will need to be balanced against product cost, value to users, dev effort, and time to market.

Amplifier: Integrate a higher rated audio amplifier to boost output power without significant heat or efficiency loss.

Speaker Placement: Move the speaker module to the sides of the device instead of the back. This provides less obstruction for the driver and clearer output.

Firmware: Increase the default alert volume, add dynamic range adjustments, and provide user volume calibration during setup.

Speaker Upgrade: Evaluate higher sensitivity speaker drivers (dB SPL) to achieve greater loudness.

Example 2: Difficult Install Experience

Problem: 20% of Beta testers struggled with mounting because the adhesive didn’t stick well on textured walls. As a result, the average setup time exceeded 12 minutes and the team missed its setup time metric.

Mitigations:

Improve Setup Guides: Clarify, in the app and in the physical booklet, how to prep textured surfaces before applying the adhesive.

Improve Adhesive: Implement an adhesive with a stronger bond to textured surfaces or increase the number of adhesive strips.

Different Mounting Tech: Design an additional mounting plate with screws to augment the adhesives.

Housekeeping

Lastly, there’s some admin work involved in managing trials. I didn’t want to overlook it, because product and tech makers often take such tasks for granted. But details matter.

Recruiting

When finding users for your trial, you might need an incentive structure to motivate them. Incentives can range from gift cards to post-trial discounts on production models. Use screening criteria to ensure that users fit your target demographic. For our example, that means customers who live in homes (not apartments) for access to their HVAC, consent to providing setup photos, use iOS, and are available to submit data every 5 days. Finally, make sure NDAs are in place. One of the keys to delighting end customers is the element of surprise, which is only possible if there are no leaks.
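
If applications come in through a form, the screening criteria above can be applied as a simple checklist. This is a rough sketch, not a prescribed process: the `Applicant` fields and the `screening_failures` helper are hypothetical names that mirror the thermostat example.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    name: str
    lives_in_home: bool             # homes, not apartments, for HVAC access
    consents_to_setup_photos: bool
    uses_ios: bool
    can_submit_every_5_days: bool
    signed_nda: bool


def screening_failures(applicant: Applicant) -> list[str]:
    """Return the criteria an applicant fails; an empty list means eligible."""
    checks = {
        "lives in a home with HVAC access": applicant.lives_in_home,
        "consents to setup photos": applicant.consents_to_setup_photos,
        "uses iOS": applicant.uses_ios,
        "can submit data every 5 days": applicant.can_submit_every_5_days,
        "signed NDA": applicant.signed_nda,
    }
    return [criterion for criterion, passed in checks.items() if not passed]


# Made-up applicants, for illustration only.
alex = Applicant("Alex", True, True, True, True, True)
sam = Applicant("Sam", False, True, False, True, True)
print(screening_failures(alex))
print(screening_failures(sam))
```

Returning the list of failed criteria, rather than a plain yes or no, makes it easier to see which requirement is filtering out the most applicants.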

Recruitment Email

Make a concise, attention grabbing email that excites potential users for trial participation. Highlight how their time will help your product, compelling parts about the mission, required commitments, incentives, and a sign up form. Provide regular, accurate updates and be quick to respond to questions.

User Instructions

This may seem obvious, but include simple, easy to follow instructions on each week’s trial activity. Include pictures and SOPs. The harder they are to understand, the less meaningful the data will be for product insights.

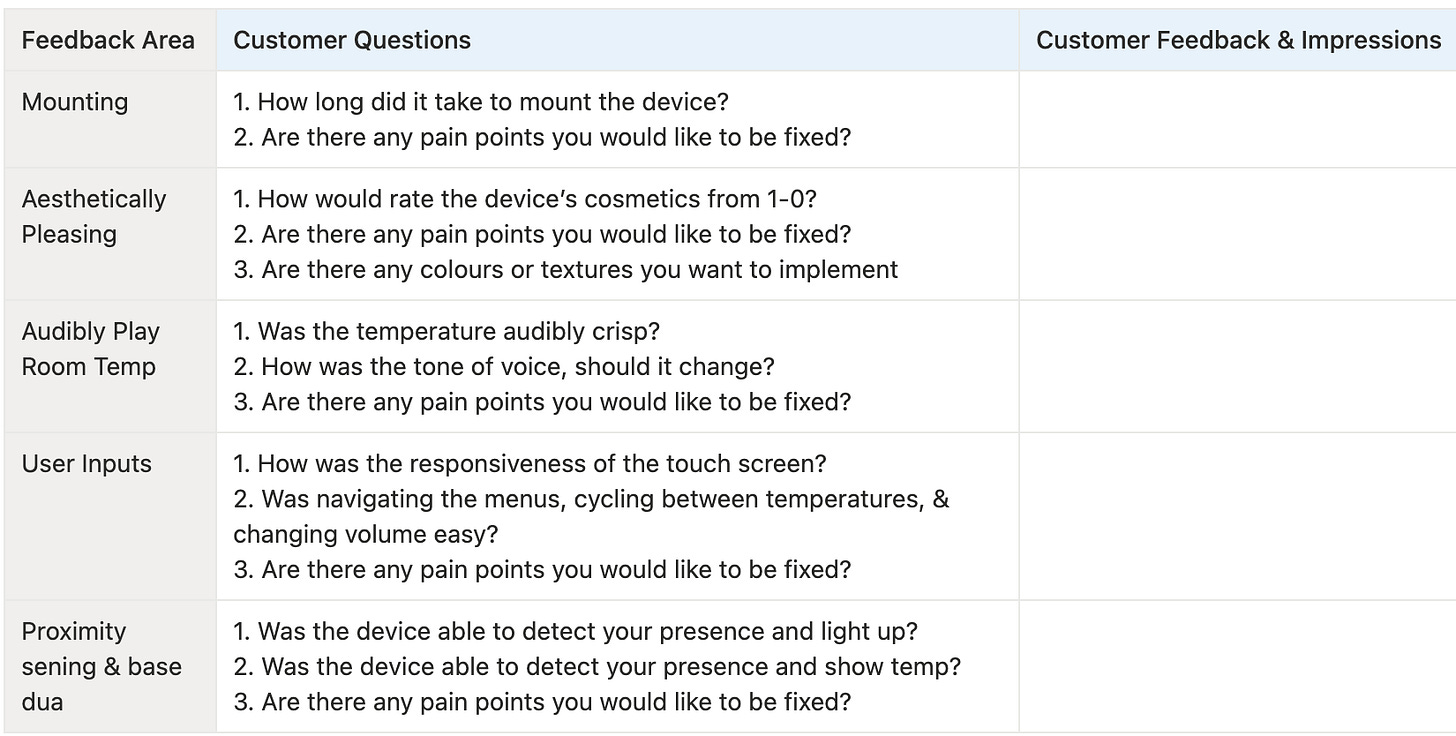

Feedback

We made a test plan in the section above, but you may be wondering how to collect feedback from customers. Typically, PMs use standard trial homework templates like the one below, which break down user tasks into a format where feedback can be recorded. Use a proper platform rather than a simple survey so you can track versions, timestamps, photos, and device metadata.
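
To make the idea concrete, here is a minimal sketch of what a single feedback record might hold if you stored it yourself. It is not any particular platform’s schema: the `FeedbackEntry` class and all of its field names are assumptions chosen to cover versions, timestamps, photos, and device metadata.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class FeedbackEntry:
    tester_id: str
    trial_phase: str            # e.g. "alpha", "beta", "gamma"
    week: int
    task: str
    rating: int                 # 1 (poor) to 5 (great)
    comments: str
    firmware_version: str
    device_serial: str
    photo_paths: list[str] = field(default_factory=list)
    # Timestamp is recorded in UTC at creation time unless one is supplied.
    submitted_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def __post_init__(self) -> None:
        if not 1 <= self.rating <= 5:
            raise ValueError("rating must be between 1 and 5")


def to_json(entry: FeedbackEntry) -> str:
    """Serialize an entry so it can be stored or sent to a dashboard."""
    return json.dumps(asdict(entry), sort_keys=True)


# Made-up entry, for illustration only.
entry = FeedbackEntry(
    tester_id="T-014",
    trial_phase="beta",
    week=2,
    task="Mount the thermostat and complete setup",
    rating=3,
    comments="Adhesive did not hold on a textured wall.",
    firmware_version="0.9.2",
    device_serial="HSP-000123",
    photo_paths=["wall_mount.jpg"],
)
print(to_json(entry))
```

Tying every comment to a firmware version and device serial is what lets engineering reproduce an issue instead of guessing which build the tester was on.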

That’s all for now. How does your team run user testing for physical devices?

Thanks for reading
